As a disclaimer, this is probably the geekiest post I have written on here yet. It’s about statistics. You have been warned.

Don’t disappear just yet. It does get better.

For a while I have been dissatisfied with mixed models and how they have been used in ecology. On the one hand they are wonderful and they allow you to deal with nasty problems like pseudoreplication. On the other hand I have always been concerned about the lack of any measure of goodness of fit. Many papers now use mixed models without reporting something like an R2 statistic and so we have little idea how good (or bad) these models are at describing the patterns you are looking at. R2 statistics are important (as Jeremy Fox (sorry I misatributed credit here – thanks to Nick Golding on Twitter for the correction) Brian McGill has pointed out at the ever excellent dynamic ecology blog) and if our R2 is small we may well be barking up the wrong tree when we’re looking to explain how our system works. In his blog post Jeremy Brian points out research that suggests ecologists work tends to have an R2 of 0.02-0.05. We should be ashamed of this.

Anyway, papers with mixed models often report AIC values which as a means of model selection are really useful. However, they don’t inherently tell you much about the fit of your model. This means that for a whole swathe of new papers we have little idea how good the fit of models really is.

This is where a new paper by Shinichi Nakagawa and Holger Schielzeth published in Methods in Ecology and Evolution comes in.

They have come up with R2 equivalents for mixed models. And I love them for it.

These new methods allow us to calculate the R2 values for fixed effects in our models and fixed effects and random effects combined. Being statistical types they gave them catchy names like R2GLMM(m) and R2GLMM(c). Even though the names are clunky, if you are an ecologist (or even non-ecologist) that uses mixed models you must read this paper. It includes good worked examples and importantly an R script which will allow you to calculate the statistic (find this in the supplementary bit which you can access for free, I have included it below as well but please go and read the paper).

I hope that this will lead to papers which use mixed models to calculate their goodness of fit more regularly so we can assess how good people’s models actually are. I for one will certainly be using it.

Nice post! Hadn’t seen that paper but it looks great.

Thanks, that was my aim really. You can tell because it’s such a badly written blog post this week! The more people that know about this the better as far as I am concerned.

I like their idea…but at the same time I’m always left with feeling like a mixed model is just a lazy man’s Bayesian model. Why not just build a real hierarchical model and look at how much variance was explained at each level in the model?

I don’t know enough about bayesian stuff to comment really. Given its increasing popularity there must be call for a good introduction course or textbook to bayesian stats for ecologists. Do you know of any? The only courses I have been to actually made things worse due to poor teaching…

There are a few excellent resources for ecologists interested in learning Bayesian basics. My favorite is Marc Kery’s “Introduction to WinBUGS for Ecologists”. Michael McCarthy also has a very nice book, “Bayesian Methods for Ecology.” Both books are excellent, very basic introductions. They are well formatted and well written. They are slightly focused on population ecology. For a more technical and thorough reading, James Clark has great books including “Models for Ecological Data: An Introduction”.

Thanks for your response Daniel. I have been looking at investing at the ‘Bayesian Methods for Ecology’ book and I might get it soon. I really need a gentle introduction as it is something completely new to me.

Why not just extract the deviance of the NULL model (i.e. included the random effects but no fixed effect except the intercept), and then compute the percentage of deviance explained by the fitted model? This gives a sound and easily interpreted indication of structural goodness of fit, and is analogous to R2 anyway. I’ve done this in my papers using mixed effects models for years now.

Good idea, since it is the additional variance explained by the fixed effects that we are largely concerned with. I have just looked up a couple of your papers and might follow this approach in the work I am currently doing since its implementation is a bit simpler than that in the paper I linked to. Thanks for the tip.

Also, while you might have been doing this for years I often see papers presenting mixed models which do not have any measure of goodness of fit. Reviewers should be asking for things like this to be presented in papers, but for some reason it doesn’t seem to be very common.

Hello Barry, Could you please post the title (or link) to a couple of your papers in which you used this method? Thank you!

Barry or Philip, I need some clarifications with this method please. I apologize in advance if my question has an obvious answer which I am not grasping. By “computing the percentage of deviance explained by the fitted model”, do you mean computing the value below?

deviance of the fitted model/(deviance of the fitted model+deviance of the null model)

If not, could you please clarify the exact formula that should be used to get the “deviance explained by the fitted model”? I would really appreciate your help.

Hi Niloo, this blog post might help – https://ecologyforacrowdedplanet.wordpress.com/2013/08/27/r-squared-in-mixed-models-the-easy-way/

I think I found a built-in function to compute the difference suggested by Barry:

r2.corr.mer <- function(m) {

lmfit <- lm(model.response(model.frame(m)) ~ fitted(m))

summary(lmfit)$r.squared

}

The first figure in the output is R^2 for fixed effects only, and the second is for fixed plus random effs.

[http://stats.stackexchange.com/questions/95054/how-to-get-an-overall-p-value-and-effect-size-for-a-categorical-factor-in-a-mi/240092#240092]

How does one set out to report the R2GLMM in a written analysis summary? Do you report a p value too?

R2 doesn’t have a p value associated with it. I always report my mixed model results as a list of possible models along with their associated AIC/AICc and some measure of deviance explained. Does that help?

Nice post, Philip. I just used the code from the paper to produce R2 in a wide range of lmer() models. Lo and behold, some of the models I had painstakingly selected by AIC and BIC were alright, others were abysmal. I would have never known. lmer() doesn’t even have a predict method implemented as the authors think that’s also questionable. So for any sort of evaluation, one has to figure out predictions oneself with the coefficients and design matrix as Nakagawa and Schielzeth did.

BTW in response to Loz’s post and while I’m not a fan of p-values, there is a method to get p-values out of lmer() models that, as far as I know, has not been condemned by the powers that be, yet. It is based on MCMC sampling and is in library(“languageR”). The function is pvals.fnc()

For anyone who is not able to open the link of the paper:

Click to access 9c9605157c864f08d5.pdf

I have that small world feeling Phil! Came across your blog by chance this morning having googled ‘R2 for lmer mixed models’. Even weirder as I met Shinichi on Friday when he gave a guest seminar on meta-analysis here in Edinburgh. This is a helpful post and a good pointer towards the paper, and I will be sure to follow your blog more closely from now on.

Hi Frazer! Long time no see! Are you INTECOl-ing this year? Would be good to hook up for a drink.

Glad you found it useful, I will try to keep things interesting on here!

Howdy Philip (and readers). Here’s a link to a page about quantifying uncertainty in R-squared for GLMMs using MCMC sampling. Might be useful for model comparison: http://mbjoseph.github.io/blog/2013/08/22/r2/

Thanks for that. Very useful!

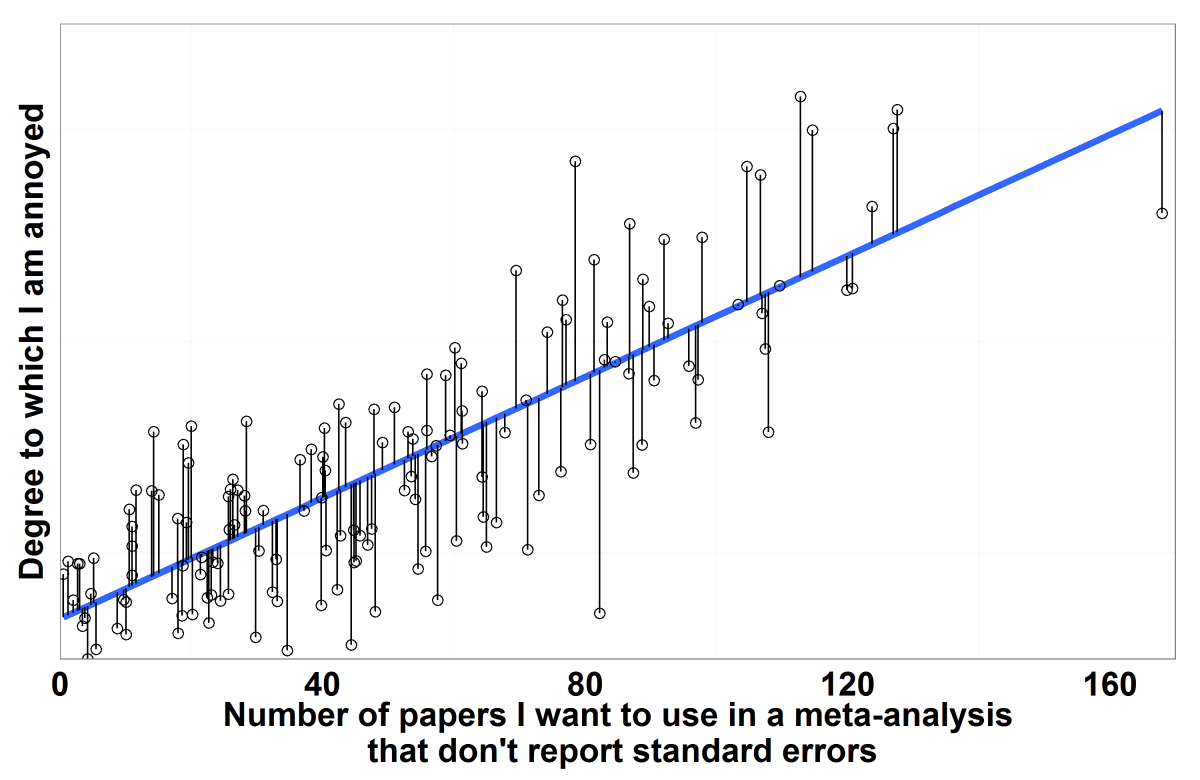

Thanks for this blog – I did not know about this till I saw a blog in Ecology in silico. I will pass this blog onto Holger. Also, about missing SE for meta-analysis, have you thought about data imputation? Or using SD from similar studies?

I have tried imputation and for the study that I am doing at the moment, it looks like that is what I will use. It seems a better solution than missing out entire datasets that might be useful.

Good luck! If you are interested in what I wrote recently on this topic – just email me. Best wishes – Shinichi

Thanks! I am just now reading through a couple of your papers – Model averaging, missing data and multiple imputation: a case study for behavioural ecology and Methodological issues and advances in biological meta-analysis . Let me know if you have any pointers on similar useful papers. I will send you an email soon if I still need help.

Hi Phil (and Shinichi). Any ideas how the script could be adapted for MCMCglmm models? Dodgy Sierra Leonian internet is making it hard to search for solutions so I’m currently stuck at trying to return the fixed effect design matrix…

No idea at the moment. I will have a look later on when I get some spare time. If you find anything in the mean time, post it up here.

Hey Frazer. I didn’t find anything I’m afraid. I have never used MCMC for any models. Have you had any luck finding info elsewhere?

Hi Frazer,

I wonder if you ever managed to adapt this for MCMCglmm models. I am having real trouble understand how to extract the variance of the fixed effects. I believe in MCMCglmm it is easy to extract the variance associated with a random effect (it is output), but not for the fixed effects….

Jo

Hi Frazer – any luck figuring out how to calculate the R^2 from an MCMCglmm model?

Rob G (your brother-in-law’s brother…)

Hi Philip, sorry to post this on your blog but I thought you (or another reader) might be able to help me. I would like to calculate R2 for a binomial GLMM however I seem unable to accurately calculate the variance components for the fixed terms. Using the example data from this paper (example 2) and predicted fitted values, I obtain a variance component of 0.0138 for the fixed terms whereas they obtained 0.371.

I am using Genstat rather than R however I obtained the same results as their example for the normal GLMM. I would like to understand where the error in my method lies rather than just use the scripts in R. Thanks for any suggestions!

Difficult to say uless I see your code. I have never used genstat so can’t really give you much advice, apart from recommending a change to R. If you find a solution, feel free to post it up here.

Hi,

Sorry for using your blog as a means to get an answer to my question.

I have been trying to obtain a R^2 value for my mixed effect model using the example from this paper. Unfortunately, I cannot seem to get the R code to work on my data and I feel as if I missing something vitally obvious.

I keep getting an error when I include the function t(mF@X) from the code “VarF <- var(as.vector(fixef(mF) %*% t(mF@X)))"

While I get that mF is the name of the model applied, what does the t and X term mean? I assume that t = no. of parameters, but I cannot seem to figure out what X is from the code or the paper.

I apologies if this is a really dumb question, but I am really new to R (I switched recently from SPSS) and I am still learning the coding.

This blog post might help you: https://ecologyforacrowdedplanet.wordpress.com/2013/08/27/r-squared-in-mixed-models-the-easy-way/

It should be easier than using the code provided here!

Thank you so much!

Hi ,

I am running following model using MCMCglmm package in R,

library(MCMCglmm)

m2.1=MCMCglmm(DependentVariable~Independent1+Ind2+Ind3………+Indn,data=data,prior=m2prior)

Unlike other packages it only gives us Mean, Std Deviation and quantiles as output.

However to check model validity i need parameters like P Value, Tstat, VIF(variance inflation factor) and Rsquared.

Can you please help me with any way to get these parameter in MCMCglmm.

Thanks

Sandeep Kaushik

Sandeep1.kaushik@gmail.com

Hi Sandeep. I will be honest and tell you that I know nothing at all about MCMCglmm so can’t help.

thanks for this great post Philip